Exemplar-based Recognition of Human-Object Interactions

Jian-Fang Hu¹, Wei-Shi Zheng¹, Jianhuang Lai¹, Shaogang Gong², and Tao Xiang²

¹Sun Yat-sen University, China; ²Queen Mary, University of London

Introduction

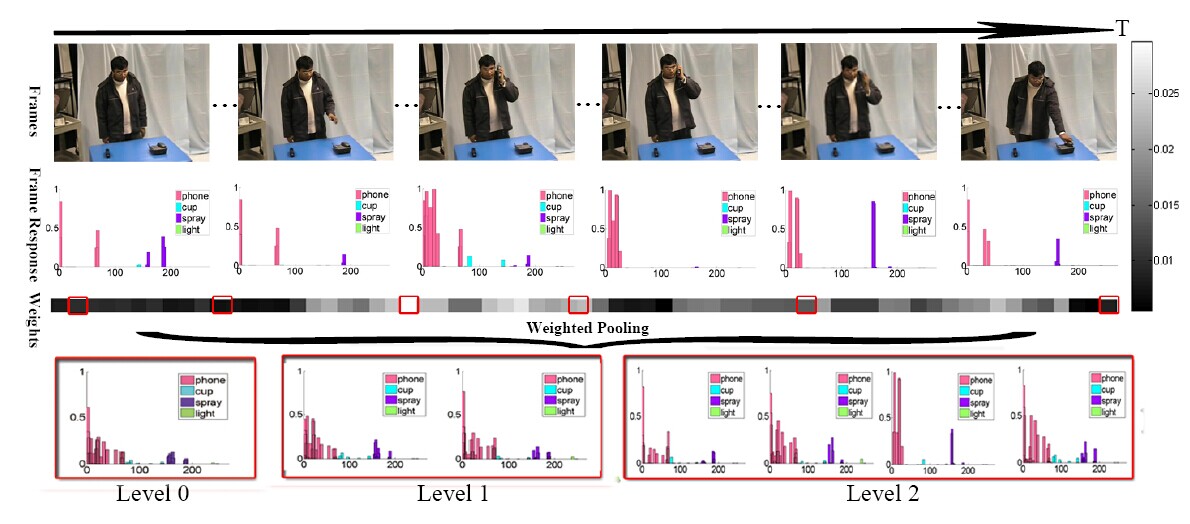

Human actions can be recognised from a single still image by modelling human-object interactions (HOI), which capture both the appearance of the person and the manipulated object and the spatial structure of their mutual relationship. Existing approaches rely heavily on accurate detection of the human and the object and on accurate estimation of human pose; they are therefore sensitive to large variations in pose, to occlusion, and to unreliable detection of small objects. To overcome this limitation, this work proposes a novel exemplar-based approach. Our approach learns a set of spatial pose-object interaction exemplars, which are probability density functions describing how a person spatially interacts with a manipulated object in different activities. Specifically, we formulate a new framework, consisting of an exemplar-based HOI descriptor and an associated matching model, for robust human action recognition in still images. We further extend the framework to HOI recognition in videos, where the exemplar representation performs implicit frame selection through temporally structured HOI modelling, suppressing irrelevant or noisy frames. Extensive experiments on two image action datasets and two video action datasets demonstrate the effectiveness of the proposed methods and show that our approach achieves state-of-the-art performance compared with several recently proposed competitors.
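To make the idea of a "spatial interaction exemplar" more concrete, the sketch below models one exemplar as a single 2-D Gaussian density over where the object sits relative to a reference body joint, scores an image by its best-matching exemplar, and scores a video by averaging only its k best-matching frames as a crude stand-in for implicit frame selection. This is a minimal illustration under stated assumptions, not the authors' descriptor or matching model (see the papers cited below for those): the single-Gaussian density, the top-k averaging, and all names (`SpatialExemplar`, `image_score`, `video_score`, `k`, `ridge`) are hypothetical.

```python
import math
from typing import Sequence, Tuple

# Hypothetical: object position relative to a body joint, normalised by person scale.
Offset = Tuple[float, float]


class SpatialExemplar:
    """Hypothetical exemplar: a 2-D Gaussian density over the object's offset
    from a reference joint for one interaction mode (an assumption, not the
    paper's exact formulation)."""

    def __init__(self, offsets: Sequence[Offset], ridge: float = 1e-3):
        n = len(offsets)  # needs n >= 2 for the sample covariance
        mx = sum(x for x, _ in offsets) / n
        my = sum(y for _, y in offsets) / n
        # Unbiased sample covariance; the ridge keeps it positive definite.
        sxx = sum((x - mx) ** 2 for x, _ in offsets) / (n - 1) + ridge
        syy = sum((y - my) ** 2 for _, y in offsets) / (n - 1) + ridge
        sxy = sum((x - mx) * (y - my) for x, y in offsets) / (n - 1)
        det = sxx * syy - sxy ** 2
        self.mean = (mx, my)
        # Entries (a, b, c) of the symmetric inverse [[a, b], [b, c]].
        self.inv = (syy / det, -sxy / det, sxx / det)
        self.log_norm = -math.log(2 * math.pi) - 0.5 * math.log(det)

    def log_density(self, offset: Offset) -> float:
        dx, dy = offset[0] - self.mean[0], offset[1] - self.mean[1]
        a, b, c = self.inv
        mahalanobis_sq = a * dx * dx + 2 * b * dx * dy + c * dy * dy
        return self.log_norm - 0.5 * mahalanobis_sq


def image_score(exemplars: Sequence[SpatialExemplar], offset: Offset) -> float:
    # Match one image against the best-fitting exemplar of an action class.
    return max(e.log_density(offset) for e in exemplars)


def video_score(exemplars: Sequence[SpatialExemplar],
                frame_offsets: Sequence[Offset], k: int = 3) -> float:
    # Crude frame selection: average only the k best-matching frames so that
    # irrelevant or noisy frames do not drag the clip score down.
    scores = sorted((image_score(exemplars, o) for o in frame_offsets), reverse=True)
    top = scores[:k]
    return sum(top) / len(top)
```

In use, one would fit several `SpatialExemplar` objects per action class from training offsets and assign a test image or clip to the class with the highest score. Taking the maximum over exemplars lets one class cover several distinct interaction modes (for example, different ways of holding an instrument), while averaging only the top-k frames keeps a clip's score from being dominated by frames in which the interaction is not visible.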

Download

Please download the dataset and features using the links below:

- Sports Image set (300 HOI images): PHOW features & Label Info

- PPMI Image set (2400 HOI images): PHOW features, Label Info, Annotations (coming soon)

- CMU-Gupta Dataset (54 HOI video clips): Image sequences & Annotations

- SYSU Dataset (119 HOI video clips): VideoData & Annotations

Citation

Please cite the following papers if you use the data in your research:

- Jian-Fang Hu, Wei-Shi Zheng, Jianhuang Lai, Shaogang Gong, and Tao Xiang, “Recognising Human-Object Interaction via Exemplar based Modelling”, IEEE International Conference on Computer Vision (ICCV), Darling Harbour, Sydney, 2013.

- Jian-Fang Hu, Wei-Shi Zheng, Jianhuang Lai, Shaogang Gong, and Tao Xiang, “Exemplar-based Recognition of Human-Object Interactions”, IEEE Transactions on Circuits and Systems for Video Technology (TCSVT), 2015.